
This Viral Twitter Thread About Kids & YouTube Is A Must-Read After Logan Paul

Every technological advancement brings another challenge for parents trying to keep their kids safe, and it can be hard to keep up as the internet continues to evolve. That's why this viral Twitter thread about kids' TV is a must-read after the Logan Paul incident. Even if you think you're doing everything right, it's important to remember that not everybody has your kids' best interests at heart, and ultimately, shielding children from disturbing content takes a village.

Paul wasn't exactly a household name before the incident, but his internet stardom was on the rise. According to USA Today, the vlogger's videos regularly pulled in more than 5 million views apiece, and last year, he made $12.5 million in ad revenue. What finally got his name trending was a video posted Sunday night depicting Paul's visit to Japan's Aokigahara, the so-called "Suicide Forest," which showed what appeared to be a body hanging from a tree. "This is a first for me," Paul can be heard remarking in the video. "This literally probably just happened." Later, according to CNN, he burst out laughing and said, "It was gonna be a joke. This was all a joke." After public backlash, Paul posted an apology video, saying, "I'm disappointed in myself and I promise to be better." But apologies aside, parents want to know how that video got published in the first place.

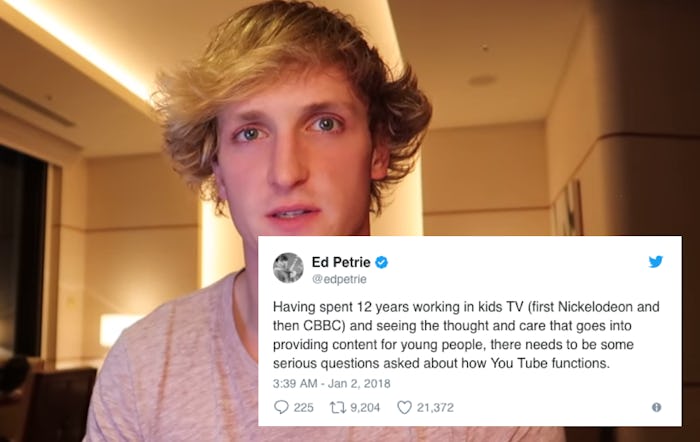

On Tuesday, British comedian, actor, and presenter Ed Petrie called out YouTube for failing to adequately screen its content before allowing users to publish it. Petrie wrote that YouTube Kids, an app that's supposedly filtered for safe content, "should vet every video before it's posted, instead of taking things down in retrospect after they get complaints. It will cost them more money. But they make A LOT of money. And with that comes responsibility." According to the YouTube Kids website, the app uses "a mix of filters, user feedback and human reviewers to keep the videos in YouTube Kids family friendly. But no system is perfect and inappropriate videos can slip through."

And in fact, a lot of inappropriate videos have slipped through. In August 2016, Today reported on a crude parody of the "Finger Family," a channel popular with preschoolers, which depicted Mickey Mouse and his family shooting each other. In November 2017, the New York Times reported on several YouTube videos that depicted disturbing sexual and/or violent scenes involving popular children's characters, and later that month, BuzzFeed News uncovered a number of "compromising, predatory, or creepy" YouTube videos featuring real children (I'll spare you the details). After BuzzFeed alerted YouTube, the videos and associated accounts were suspended, and the platform claimed that some of them had already been scheduled for removal prior to BuzzFeed's investigation.

A YouTube spokesperson provided Romper with the following statement:

Our hearts go out to the family of the person featured in the video. YouTube prohibits violent or gory content posted in a shocking, sensational or disrespectful manner. If a video is graphic, it can only remain on the site when supported by appropriate educational or documentary information and in some cases it will be age-gated. We partner with safety groups such as the National Suicide Prevention Lifeline to provide educational resources that are incorporated in our YouTube Safety Center.

YouTube also told Romper that violent content is only permitted when it's presented in an educational manner, in which case a warning screen is displayed. Videos that violate YouTube's guidelines are removed once they're flagged by users, according to the spokesperson.

But that's not enough for Petrie, who tweeted, "can you imagine the outcry if [British children's network] CBBC gave presenters a platform to show kids footage of suicide victims? But YouTube let this stuff go out and seem to think business can carry on as usual. Why are they not subject to the same rules as the rest of us?" Of course, TV is more highly regulated than the internet, both in the United States and abroad, but Petrie argues that YouTube is "such a large provider of children's content now that they should start looking at the way the rest of us do things and learn a thing or two."

Should parents pre-screen every bit of media before their children view it? In a perfect world, yes, but the world isn't perfect, and we've got laundry to fold and meals to prepare. Alphabet, the parent company of YouTube and Google, is currently worth $729 billion, according to the Guardian, thanks to ad views by parents and their kids. Seems like they could afford to hire a few more moderators, if they wanted to.